The one where 3 books answer 3 questions about AI in healthcare

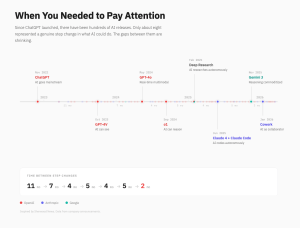

Every few years a new wave hits healthcare IT. Some reshape the shoreline; some barely ripple. Lately I’m leaning toward a simple view: Gen AI is a real step forward, but the changes that endure will come from infrastructure and incentives. In a system as regulated and closely scrutinized as healthcare, the roads matter more than the horsepower.

1) Who will benefit?

History says advantage concentrates around control points: the places where compute, data, and distribution meet. In today’s terms, that means model providers with scale (e.g., OpenAI, Anthropic, Google/DeepMind), the clouds that host them (e.g., AWS, Azure, Google Cloud), platforms already embedded in clinical workflows (e.g., Epic, Oracle Health/Cerner), and companies that own the last mile to clinicians and patients (e.g., large health systems). As though they control the “master switch,” these players have significant influence over who wins and who loses.

But the circle does widen. A second group tends to capture durable value: the people and teams who complement those control points—clinical data stewards, evaluation and safety engineers, product integrators who turn models into reliable steps inside prior auth, triage, charting, imaging, and revenue cycle. As individuals, entrepreneurs who see where the network is going (and get there early) tend to do well: they make the glue, the adapters, the “boring” parts that let many models work safely across many contexts and control points.

As general models compete and compute capital fuels greater availability, the answer to “who benefits” may not depend as much on who has the smartest model as we thought. In healthcare, the answer is probably closer to: “where do you sit relative to distribution and standards?”

2) How will they benefit?

Consider what happened when shipping settled on a standard metal box: a 20- or 40-foot container with identical corner fittings. That one decision let cranes, ships, trains, and trucks handle cargo without repacking. Risk fell. Costs fell sharply, by as much as 90% by some accounts. And the work moved: less muscle on the pier; more planning and throughput management at distribution centers and rail yards. Jobs followed the flow.

AI may trace a similar trajectory. As models ship in consistent packages (stable interfaces and licenses, plus companion evidence such as safety and efficacy data, provenance, evaluation coverage, and known hazards), risk drops across the chain. This is a chain of bits rather than atoms, though. Workflow behavior, which is increasingly digital, becomes more predictable, and so does swapping one model for another. Capital becomes willing to fund further scale because components are modular, productized, and auditable.

As for the work, I expect it to move from first-pass busywork to the “inland” roles that plan, do, study, and act in service of a learning healthcare system. Technical roles in platform and orchestration, evaluation and red-teaming, data lineage and governance, and enablement and change management will blossom. Existing roles will morph.

On the admin side, copy-paste, phone calls, and status-chasing give way to flow coordination, exception desks, audit/QA, and patient navigation (think schedulers → access-ops coordinators; coders → utilization & compliance analysts).

On the clinical side, keystrokes give way to judgment: ambient draft review, rare-case adjudication and roster reviews, care-plan design, patient counseling, and system-of-care safety and stewardship.

And on the patient/caregiver side, the role shifts from passive data source to co-steward of context, and from recipient of transactions to something closer to a navigator. Patients and caregivers will increasingly be asked to control consent and sharing, supply high-signal inputs (PROMs [patient-reported outcome measures], home-device streams, life context, and values), correct records and attach verifiable documents, flag errors or preference mismatches, and (for caregivers) support therapy reconciliation, adherence, and follow-up.

3) What is our responsibility?

I see two obligations emerging from this and running in parallel.

First: transparency as a design choice. The industrial age didn’t compound due to the steam engine alone; it compounded because we paid for disclosure. Blueprints were made public, and then builders turned them into businesses. In AI, the equivalent is releasing portable, trustworthy manifests with every meaningful update (lineage, test coverage, failure modes, guardrails) so others can evaluate, integrate, insure, and, when appropriate, improve. Procurement and reimbursement will prefer systems that come with real evidence, much as they already do for conventional therapeutics (i.e., medications) through clinical trials and pharmacovigilance.
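To make the idea of a manifest a little more concrete, here is a minimal sketch in Python. It is an illustration only, not an existing standard: the class name `ModelManifest`, its field names, and the example values are all assumptions made up for this post. The four evidence sections simply mirror the list above (lineage, test coverage, failure modes, guardrails).

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ModelManifest:
    """Hypothetical release manifest; every field name here is illustrative."""

    model_name: str
    version: str
    # Data sources and parent models this release was built from.
    lineage: List[str] = field(default_factory=list)
    # Evaluation suite name -> share of its cases that were actually run.
    test_coverage: Dict[str, float] = field(default_factory=dict)
    # Known hazards, stated in plain language.
    failure_modes: List[str] = field(default_factory=list)
    # Controls that ship alongside the model.
    guardrails: List[str] = field(default_factory=list)

    def missing_sections(self) -> List[str]:
        """Return the names of evidence sections that were left empty."""
        sections = ("lineage", "test_coverage", "failure_modes", "guardrails")
        return [name for name in sections if not getattr(self, name)]

    def to_json(self) -> str:
        """Serialize to JSON so the manifest can travel with the release."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# All values below are made-up examples, not real products or results.
manifest = ModelManifest(
    model_name="example-triage-model",
    version="1.2.0",
    lineage=["de-identified triage notes (example)"],
    test_coverage={"example-safety-suite": 0.8},
    failure_modes=["under-triages atypical presentations (example)"],
)

print(manifest.missing_sections())  # prints ['guardrails']
```

A buyer or integrator could run a check like `missing_sections()` before accepting an update, which is the software analogue of asking whether the paperwork came with the shipment.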

Second: we have to uplift the people, not just the pipes. Standards don’t only move information; they move jobs. Containerization made ports safer and faster, but it also displaced longshoremen and pushed opportunity inland. Healthcare will feel a similar migration as routine drafting and triage shrink. We will move only as fast as we give the workforce a glide path. That means making the new roles visible; creating portable credentials for evaluation, operations, governance, and enablement; and retraining with intentionality. We won’t succeed if we think the players are frozen. We need to help the team skate to where the puck is going.

Bringing it together

If advantage tends to form at the control points, and if standards are what turn demos into networks, then the winners of the next phase are (1) the builders who make AI reliable, swappable, and evidenced, and (2) the organizations that invest in the people who run that learning system well. Gen AI “thoughtpower,” like the horsepower that came before, opens the door. The plumbing and the social compact (transparency plus worker mobility) will determine how well we make the trip through it.

Want to go deeper?

These ideas are an amalgam of a few books that have been highly influential in my own thinking. Please let me know if you have encountered others for this collection!

Tim Wu, The Master Switch — Why open eras often consolidate around control points, and what that means for innovation and competition.

Marc Levinson, The Box — How a universal container standard reshaped costs, jobs, and geography—useful for thinking about AI packaging and “inland” roles.

William Rosen, The Most Powerful Idea in the World — The case that incentives for disclosure (the patent bargain) made progress compound—and why entrepreneurs matter for carrying blueprints into the world.