Since ChatGPT launched in November 2022, there have been hundreds of AI releases. If you tried to follow all of it, you drowned. If you ignored all of it, you missed something important.

I wanted to find the middle ground: the moments when the capability ceiling seemed to jump, at least for me personally. These were genuine step changes that forced me to update my mental model of what was possible, and each one set off a lot of experimentation.

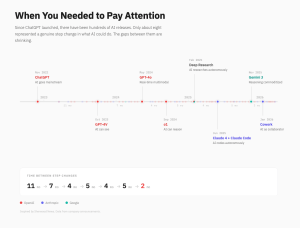

By my count, eight qualify. ChatGPT made AI conversational. GPT-4V gave it eyes. GPT-4o made the interaction real-time and multimodal. o1 showed it could reason through multi-step problems. Deep Research meant it could autonomously investigate a question and produce in minutes what would take a person hours. Claude Code meant it could write and maintain real software: not snippets, but systems. Gemini 3 commoditized reasoning. And Cowork made AI a genuine collaborator in professional workflows (I’m looking forward to having it available at work).

Reasonable people could draw the line differently. But when I laid these eight out on a timeline, the pattern that emerged was telling: the gaps between step changes are compressing. Eleven months between the first two. Then seven. Then four. The most recent: two months. Part of what’s driving the acceleration is that there are more serious contenders now. OpenAI, Anthropic, Google, and others are effectively passing the ball to one another, each leap prompting the next in quick succession. My own usage reflects this: I started with ChatGPT, moved to Gemini for a stretch, and now spend most of my time with Claude. I expect that will keep shifting as the capability lead changes hands.

In healthcare, where carbon is harder to change than silicon and the opinionated systems we built over the last decade are deeply entrenched, the compression is especially disorienting. We spent fifteen years deploying EMRs. AI is now forcing us to reimagine what we do with them on a timeline measured in months, even if real-world adoption will likely move more slowly.

I don’t know how long the cadence will keep compressing, or when things will start to feel like they have plateaued, but I hope the visualization helps separate signal from noise.